Overview

Abstract

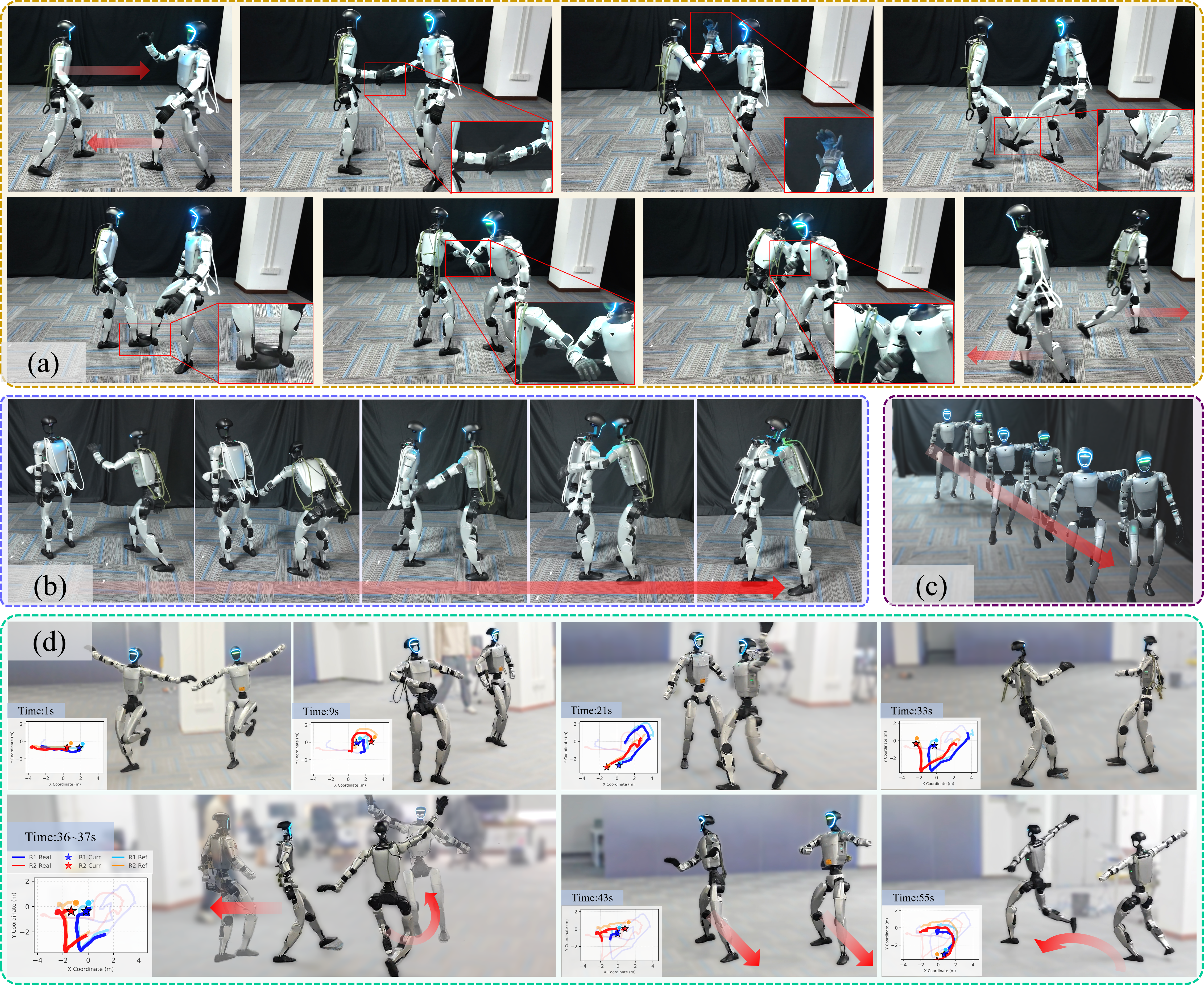

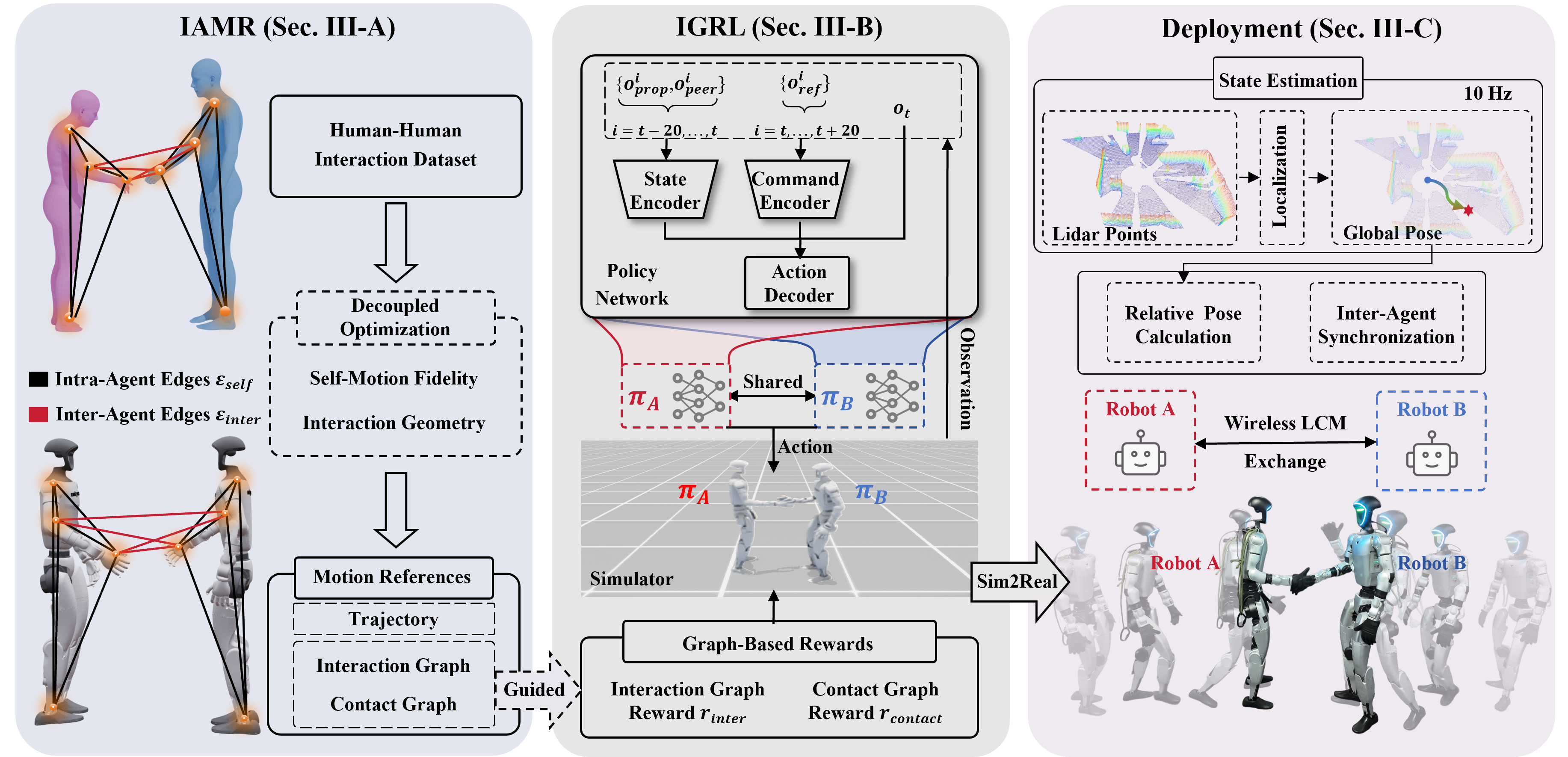

Realizing interactive whole-body control for multi-humanoid systems is critical for unlocking complex collaborative capabilities in shared environments. Although recent advancements have significantly enhanced the agility of individual robots, bridging the gap to physically coupled multi-humanoid interaction remains challenging, primarily due to severe kinematic mismatches and complex contact dynamics. To address this, we introduce Rhythm, the first unified framework enabling real-world deployment of dual-humanoid systems for complex, physically plausible interactions. Our framework integrates three core components: (1) an Interaction-Aware Motion Retargeting (IAMR) module that generates feasible humanoid interaction references from human data; (2) an Interaction-Guided Reinforcement Learning (IGRL) policy that masters coupled dynamics via graph-based rewards; and (3) a real-world deployment system that enables robust transfer of dual-humanoid interaction. Extensive experiments on physical Unitree G1 robots demonstrate that our framework achieves robust interactive whole-body control, successfully transferring diverse behaviors such as hugging and dancing from simulation to reality.

Demos

Coordinated Interaction

La La Land

Contact-Rich Interaction

Greeting

Hug

Shoulder to Shoulder

Robustness to Disturbances

Framework

Our main contributions are summarized as follows:

- Rhythm Framework: We propose the first unified framework for whole-body dual-humanoid interaction to achieve robust transfer of complex interactive behaviors to physical hardware.

- IAMR & MAGIC Dataset: We develop IAMR (Interaction-Aware Motion Retargeting) to resolve kinematic conflicts and release MAGIC (Multi-Humanoid Geometric Interaction Dataset), offering paired raw and retargeted interaction data.

- IGRL Policy: We introduce IGRL (Interaction-Guided Reinforcement Learning), a multi-agent learning module that masters coupled interaction dynamics via graph-based rewards, enabling agents to learn robust and physically consistent behaviors.

- Extensive Validation: We conduct extensive experiments on Unitree G1 humanoids. Quantitative and qualitative evaluations across diverse interaction tasks in both simulation and the real world demonstrate the superior performance and robustness of our framework.
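To make the idea of a graph-based reward more concrete, here is a minimal sketch. It is not the reward used in IGRL, whose exact form is not given on this page. It assumes a hypothetical interaction graph whose edges connect every keypoint on one robot to every keypoint on the other, and it scores how closely the current edge lengths match those of the retargeted reference. The function names, the keypoint layout, and the `sigma` scale are all illustrative assumptions.

```python
import math
from itertools import product
from typing import List, Sequence

Point = Sequence[float]  # (x, y, z) position of one body keypoint


def interaction_edges(points_a: Sequence[Point], points_b: Sequence[Point]) -> List[float]:
    """Edge lengths of a complete bipartite graph between two robots' keypoints.

    Hypothetical graph layout: one edge per (keypoint on A, keypoint on B) pair.
    """
    return [math.dist(p, q) for p, q in product(points_a, points_b)]


def graph_reward(
    ref_a: Sequence[Point],
    ref_b: Sequence[Point],
    cur_a: Sequence[Point],
    cur_b: Sequence[Point],
    sigma: float = 0.1,  # assumed tolerance in metres, not a value from the paper
) -> float:
    """Return a reward in (0, 1]; 1.0 means every cross-robot distance matches the reference."""
    ref = interaction_edges(ref_a, ref_b)
    cur = interaction_edges(cur_a, cur_b)
    mse = sum((r - c) ** 2 for r, c in zip(ref, cur)) / len(ref)
    return math.exp(-mse / sigma ** 2)


# Toy keypoints (for example, one hand and one shoulder per robot).
ref_a = [(0.0, 0.0, 1.0), (0.0, 0.2, 1.2)]
ref_b = [(0.5, 0.0, 1.0), (0.5, 0.2, 1.2)]

# Robot B drifts 10 cm away from A along x, so cross-robot distances change.
drifted_b = [(x + 0.1, y, z) for x, y, z in ref_b]

print(graph_reward(ref_a, ref_b, ref_a, ref_b))      # matches the reference
print(graph_reward(ref_a, ref_b, ref_a, drifted_b))  # penalised for drifting apart
```

Because the sketch depends only on relative distances between the two robots, moving both robots together by the same offset leaves the reward unchanged, while one robot drifting away from its partner lowers it. That is one plausible reason to prefer a relational reward over per-robot pose tracking alone in physically coupled tasks.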

BibTeX citation

If you find our work useful, please consider citing our paper:

@article{chen2026rhythm,
  title={Rhythm: Learning Interactive Whole-Body Control for Dual Humanoids},
  author={Hongjin Chen and Wei Zhang and Pengfei Li and Shihao Ma and Ke Ma and Yujie Jin and Zijun Xu and Xiaohui Wang and Yupeng Zheng and Zining Wang and Jieru Zhao and Yilun Chen and Wenchao Ding},
  journal={arXiv preprint arXiv:2603.02856},
  year={2026},
  url={https://arxiv.org/abs/2603.02856}
}